What Christianity Absorbed, Built, and Left Behind

People say this all the time.

That the West got its ideas about pluralism, tolerance, and liberty from Christianity. That without it, there would be no concept of human dignity, no rights, no freedom in the modern sense. And that if those things feel unstable now, the solution is simple: return to the source.

The claim that pluralism, tolerance, and liberty are direct inheritances of Christianity is not just oversimplified. It reverses the historical pattern.

In Part 1, I pushed back on the idea that Christianity “founded the West” in any clean or singular sense, or that returning to it offers an obvious path forward. In Part 2, I stepped back and looked at something more fundamental: the fragility of freedom itself. Not as an abstract ideal, but as a social order that depends on limits, restraint, and a population capable of sustaining it. More importantly, I looked at how quickly that order begins to break down when those conditions are no longer present.

Across the responses to both pieces, there was a shared sense that something is not working. Not just politically, not just culturally, but at a deeper level that is harder to name.

One way to make sense of that is to stop looking for a single cause and start looking at how the whole inheritance fits together.

Western civilization did not develop along one track. It emerged through multiple layers operating at the same time. At a minimum, those layers include institutions, culture, and psychology.

Institutions include law, political authority, and the distribution of power. Culture includes religion, tradition, identity, and shared meaning. Psychology includes the moral instincts people use to interpret the world: instincts tied to fairness, loyalty, authority, purity, harm, belonging, and threat.

For long stretches of time, those layers reinforced one another. Institutions reflected shared values. Cultural traditions gave meaning to authority. Moral instincts were channeled through forms of life that provided both order and legitimacy.

But that fit was never permanent.

When those layers begin to pull apart, the result is not merely disagreement. It is instability.

That is the backdrop for this final piece.

The goal here is not to argue that Christianity caused the West, or that it deserves credit for everything people now associate with Western civilization. It is also not to reduce Christianity to a purely destructive force. Both approaches distort the picture in different ways.

The same problem appears in the phrase “Judeo-Christian values.” This often creates the impression of a smooth and unified inheritance, when the actual history is far more fractured. Judaism and Christianity are related, but they are not interchangeable. Christianity did not simply preserve Jewish covenantal thought. It reinterpreted it, universalized it, and claimed fulfillment over it. A tradition rooted in a particular people, law, land, and covenant was recast as a universal message for all mankind.

This repositioning changed the role of religion entirely. It no longer sat alongside other domains. It began to judge them.

It loosened religion from peoplehood and place. It made belief itself the primary marker of belonging. And once belief becomes the primary boundary, disagreement takes on a different moral weight.

Today’s article will address the harder question:

What did Christianity reorganize, what did it scale, and what did it leave unstable?

Because Christianity’s real inheritance was not simply compassion, liberty, or dignity. It reshaped how belief, authority, identity, and moral obligation functioned at a civilizational level. It expanded moral language in ways that could operate across large populations, but it also introduced sharper boundaries between true and false belief, salvation and error, belonging and exclusion.

That combination, expansion on one side and constraint on the other, is where the inheritance becomes complicated.

SECTION I: THE GOOD

What Christianity Absorbed and Reorganized

Before getting into what Christianity actually contributed, it’s worth being clear about what is usually attributed to it.

A moral framework. Stable family structures. The unification of fragmented tribal societies into something resembling a shared civilization. A sense of cohesion strong enough to hold large populations together.

Those developments did happen. The question is where they came from…

Because none of those things begin with Christianity. They depend on something older: stability across generations, shared practices, inherited obligations, and a way of life that binds people before it explains itself.

That is what tradition is.

The word itself comes from the Latin traditio: a handing over, a passing down, something delivered across generations. But that definition only gets you so far. Tradition is not just a set of ideas preserved in texts or doctrines. It is lived. It shows up in habits, rituals, inherited gestures, seasonal rhythms, family patterns, and the quiet repetition of things people do not always stop to explain but continue to do anyway.

It exists in the structure of daily life.

You see it most clearly in how societies deal with death.

Long before Christianity became dominant in Europe, burial practices already reflected a deep sense of connection between the living and the dead. In the Stone Age, communities used mass graves in caves or pits. Later, megalithic cultures constructed communal tombs that anchored memory to specific places. Indo-European groups developed barrows and cremation practices that changed over time while preserving the same underlying logic.

The dead were not discarded. They were placed, remembered, and integrated into the ongoing life of the community.

Tradition, in that sense, is not something invented at a particular moment. It is something carried forward, shaped and reshaped over time without losing its original intention.

Christianity enters into that world rather than creating it from scratch.

What changes is not the existence of tradition, but its scale and its organizing logic.

Earlier religious life was largely tied to local identity: tribe, land, household, ancestry, city, and people. Christianity expands beyond that. It speaks in universal terms and builds a shared symbolic order that can operate across regions and populations that do not share the same lineage, gods, rituals, or customs.

That increases the reach of the moral imagination.

Concern no longer stops at the boundary of immediate belonging. It extends outward, attaching value to individuals beyond their role within a specific family, tribe, or city. Over time, that broader vision feeds into developments people now associate with the Western inheritance: ideas about dignity, education, care for the poor, moral responsibility, and obligation toward those outside one’s immediate circle.

But this is typically where the story gets oversimplified.

Those impulses did not originate with Christianity. Traditions within the Greco-Roman world had already developed forms of civic responsibility, philanthropy, patronage, public works, and mutual obligation. Grain distributions, civic benefaction, philosophical ethics, and local forms of duty were not Christian inventions.

But even the Greco-Roman world was not self-contained. It had already absorbed influences from older and neighboring civilizations (Egyptian, Mesopotamian, Anatolian, and Phoenician) through trade, conquest, and cultural exchange. As scholars like Martin L. West and Walter Burkert have shown, Greek thought itself was shaped in part by these eastern traditions.

The ancient world was not morally empty before the church arrived. It was already layered, interconnected, and carrying forward inherited forms of order, obligation, and meaning.

You can see this clearly in Stoic thought. Christianity is often treated as if it introduced universal human concern into a cruel and indifferent ancient world. Stoicism already spoke in universal terms. It could describe human beings as participants in a shared moral order and extend concern beyond tribe, city, or immediate kinship.

But the structure was different.

Runar Thorsteinsson’s comparison of Roman Christianity and Roman Stoicism helps clarify the distinction. Stoicism could speak of universal humanity without making moral belonging depend on conversion to a saving truth. Early Christianity, by contrast, carried a universal message while also drawing a sharper boundary around religious adherence. Its moral vision expanded outward, but it did so through a division between those inside and outside the saving order.

Christianity did not invent universal concern, but it did reorganize it.

It took older moral instincts, philosophical ideas, Jewish inheritance, Roman scale, and local traditions, then bound them into a universal religious narrative. It gave those instincts a broader scope, a more unified story, and a more durable institutional form.

But expansion alone does not explain why a civilization holds together.

A social order lasts when it fits the way people already experience the world.

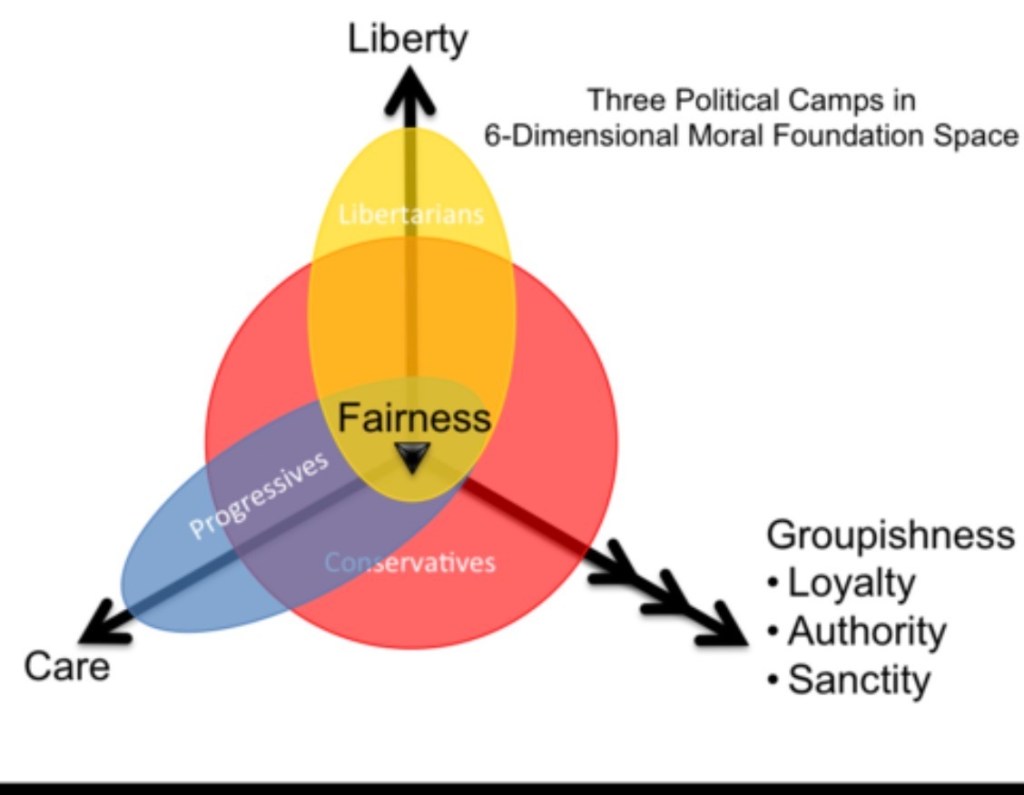

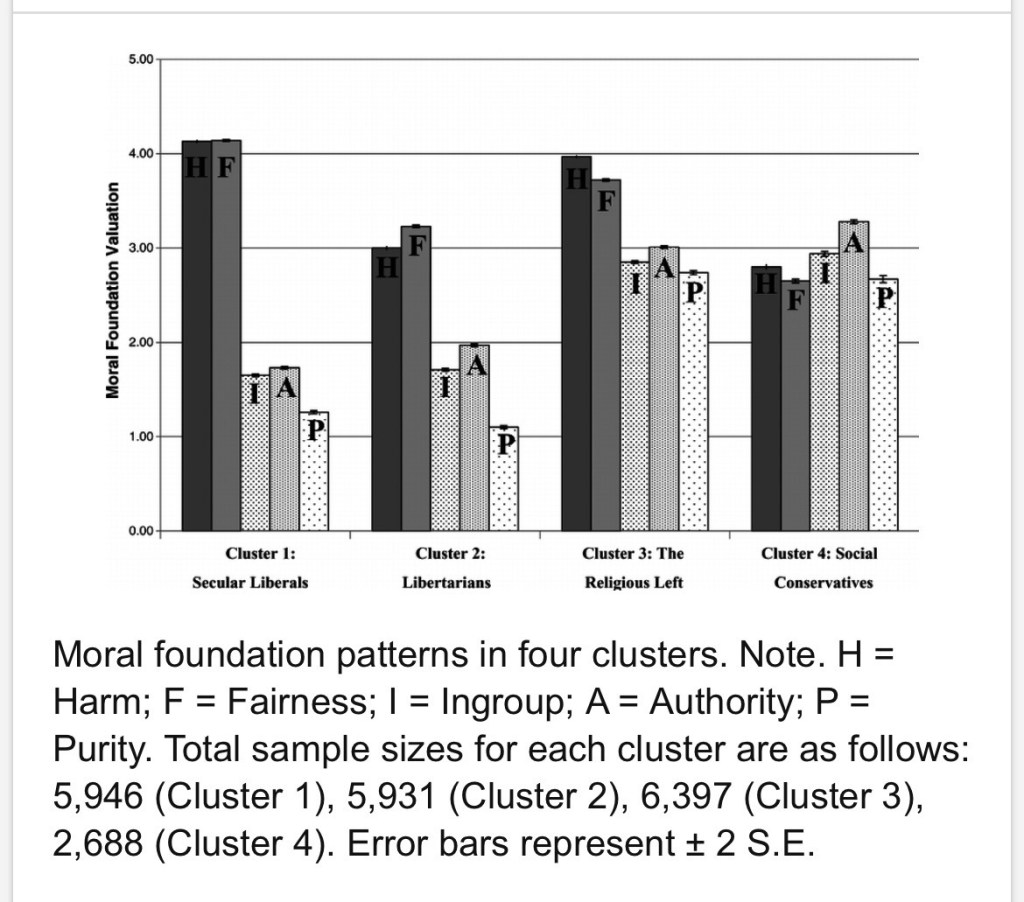

People do not move through life as detached rational observers. They respond through instinct: loyalty and betrayal, fairness and injustice, authority and rebellion, purity and contamination, belonging and threat. These instincts do not operate on their own. They cluster.

In more traditional societies, moral intuitions tend to reinforce one another. Care, fairness, loyalty, authority, and a sense of the sacred operate together rather than pulling apart. Even when people disagree, they often draw from the same underlying moral vocabulary when interpreting what is happening around them.

That shared moral vocabulary gives a society stability.

Christianity operated at that level.

It did not simply present moral rules. It gave instinct narrative form and placed it inside a larger story about meaning, suffering, hierarchy, obligation, sin, redemption, and ultimate reality. It offered a way of interpreting the world itself.

For people living in unstable conditions, where political authority could be inconsistent and survival uncertain, that kind of story organized experience. It offered coherence in a world that might otherwise feel random. It placed individuals inside a larger order and gave meaning to suffering, duty, death, and loss.

Once that fit took hold between cultural meaning, institutional power, and moral instinct, it became difficult to dislodge.

At the same time, Christianity did not remain completely closed off to innovative thought. Even within a religious order that emphasized authority and inherited truth, there were moments where that inheritance was tested from within.

Peter Abelard represents one of those moments.

His importance lies less in the drama of his life and more in the method he applied to truth itself. The intellectual world he entered was structured around inherited authority. Figures like Augustine were treated as settled voices, and the role of the student was often to understand, organize, and transmit what had already been established.


Reasoning had a place, but it operated within limits. It was expected to clarify, not destabilize.

Abelard did not reject the tradition from the outside. He worked within it and exposed its internal tensions. In Sic et Non, he placed authoritative statements side by side in a way that made contradiction difficult to ignore.

If these sources were meant to provide certainty, why did they diverge so sharply?

If truth had already been handed down in a unified form, why did it fracture under comparison?

He treated those questions as a starting point rather than a threat to avoid.

“For it is from doubt that we arrive at questioning, and in questioning we arrive at truth.”

That quote represents the change in intellectual posture.

Instead of beginning with certainty and using reason to defend it, Abelard begins with tension and uses reason to work through it. Authority alone no longer settles the issue. Claims must be examined, language clarified, and assumptions tested.

Once questioning becomes legitimate, authority can no longer rely on transmission alone. It also has to persuade.

Abelard pushed beyond accepted limits. He applied reason to doctrines often treated as beyond rational explanation and placed greater emphasis on intention in moral evaluation. In doing so, he opened space for a more nuanced understanding of ethics, one not entirely bound to inherited categories.

The response to him was what you would expect from institutional power.

He was condemned. His works were burned. He was brought before councils that were less interested in exploring his arguments than in containing their implications. The reaction showed what was at stake. A religious order grounded in authority does not easily absorb a method that legitimizes doubt.

And yet the method persisted.

Even when his specific conclusions were rejected, the habit of inquiry he modeled proved difficult to suppress. The practice of setting opposing views side by side and working through contradiction became central to scholasticism. The intellectual tradition that later shaped medieval universities carried forward elements of an approach once treated as dangerous.

Abelard does not stand alone as the cause of a broader intellectual reopening. The recovery of classical texts, the reintroduction of Aristotle, contact with Islamic and Jewish scholarship, and the growth of educational institutions all played a role.

What his story represents is a shift in attitude.

Inherited knowledge no longer functioned as a sealed deposit. It became something that could be examined, refined, and, within limits, challenged.

Of course, those constraints never fully disappeared.

Abelard was allowed to question, but not indefinitely. He was permitted to reason, but not without consequence. The same religious culture that made his work possible also defined where it had to stop.

That tension between authority and inquiry did not remain confined to intellectual life. It also carried forward into the institutions that developed over time.

The medieval university is one of the clearest places to see this pattern at work. Often treated as a distinctly Christian achievement, it grew out of a much broader mix of influences.

In Spain, Baghdad, and Cairo, Islamic schools, libraries, and observatories held resources far beyond anything available in much of Europe at the time. Arab, Jewish, and Christian scholars shared intellectual interests through expanding trade networks and translation movements. After the Christian capture of Toledo in 1085, that city became one of the key places where these worlds met, allowing texts to move across languages, traditions, and religious boundaries.

The Western reopening of inquiry did not happen because Europe simply looked inward and rediscovered itself.

It happened because knowledge traveled.

Averroes’ commentaries on Aristotle, translated into Latin, became essential sources for thirteenth-century Christian intellectuals, including Thomas Aquinas. That alone should complicate any idea that Christian scholarship developed in isolation. The university absorbed, translated, debated, and reorganized knowledge that had already passed through Greek, Arabic, Jewish, and Latin traditions.

Even the structure of medieval universities reflects that broader inheritance. They developed their own corporate identities, often governed collectively by masters, with distinct curricula and examination systems. By the late thirteenth century, masters of arts could vastly outnumber masters of theology. Historian Charles Freeman notes one example in which 120 teachers of the arts were listed against only 15 masters of theology. That imbalance tells you what mattered most. The curriculum leaned heavily on classical texts, not purely Christian foundations.

Christian Europe helped institutionalize learning, but the material being organized was older, broader, and more cosmopolitan than the church-centered story suggests. The university becomes another example of Christianity’s larger pattern: it absorbed existing goods, gave them institutional form, and placed them inside its own theological horizon.

But the results did not move in one direction.

The same religious vision that could support care and dignity could also justify hierarchy and control. Because the tradition depended on scriptural interpretation, and interpretation depended on authority, very different conclusions could emerge from the same source material.

That instability is not only a matter of later interpretation. It is already present in the texts themselves.

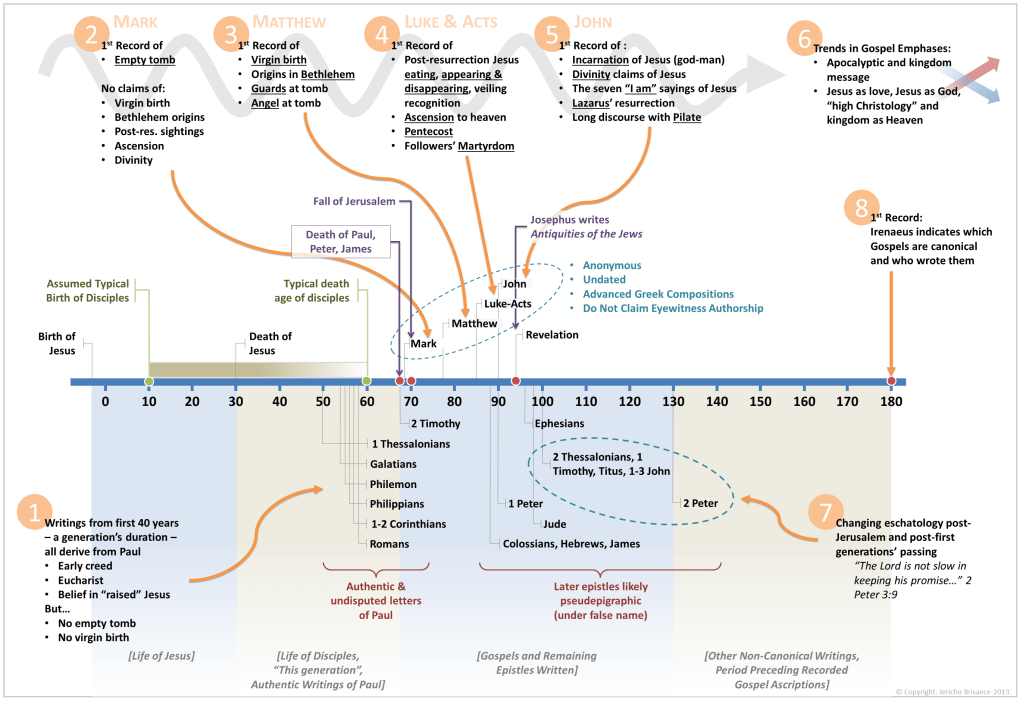

The Gospels do not present a single, unified account. They offer overlapping portraits that do not fully align.

In the Gospels of Matthew and Mark, Jesus cries out, “My God, my God, why have you forsaken me?” while in the Gospel of John, he concludes, “It is finished.” The tone shifts from abandonment to completion.

The timeline shifts as well: the Synoptic Gospels present the final meal as a Passover meal, while John places the crucifixion before Passover begins.

Even the ethical posture is not entirely consistent: Jesus teaches “turn the other cheek” in Matthew and in Luke, yet later in Luke he tells his followers, “Let the one who has no sword sell his cloak and buy one.”

Taken together, these are not minor discrepancies. They open space for fundamentally different readings of what the tradition demands.

Christianity persists not as a fixed form, but as a tradition capable of producing multiple, competing forms while still claiming continuity.

This becomes especially clear in debates over slavery.

Christians were involved in abolition movements, and that history is part of the record. The language of universal moral equality played a real role in mobilizing opposition to slavery and reshaping moral expectations.

But that is not the whole story.

The same texts were also used to defend slavery, reinforce it, and argue that existing social orders were divinely sanctioned.

That contradiction is not incidental. It reveals something important about the Christian inheritance itself.

A religious order that combines universal moral language with authoritative texts creates the conditions for both expansion and constraint. It can push moral concern outward, but it can also bind that concern within approved categories. The outcome depends on who interprets the texts, which authorities prevail, and what social pressures shape the reading.

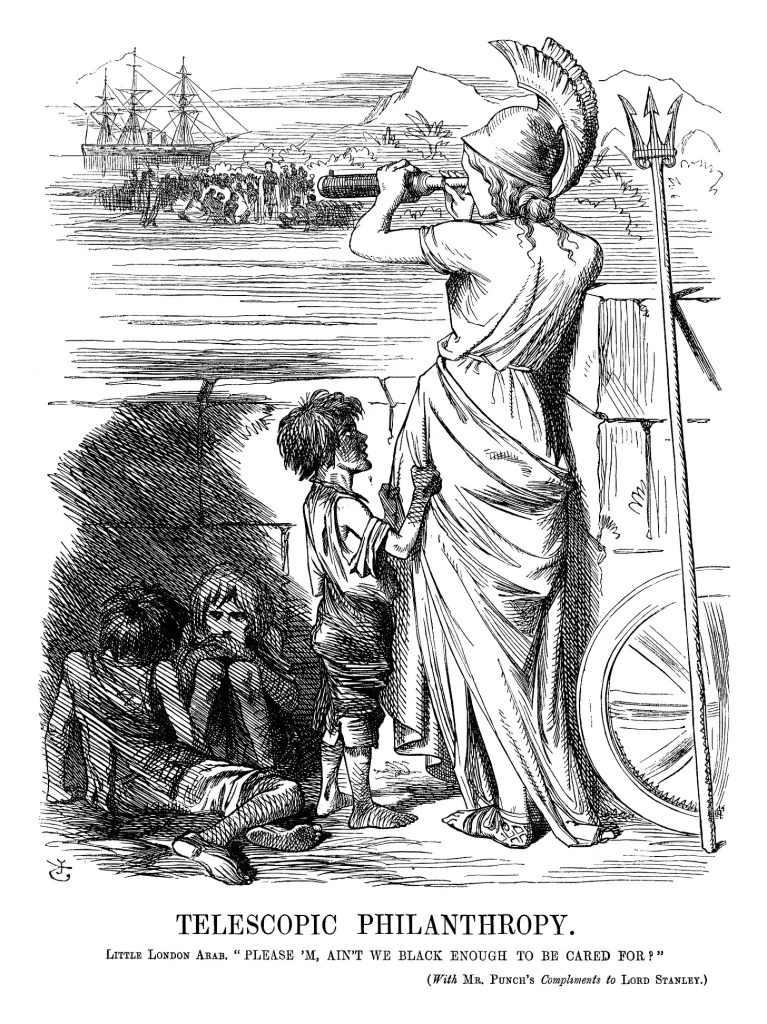

Critics of abolitionist movements, including Thomas Carlyle, argued that what they saw as abstract humanitarian concern could override more immediate obligations or practical realities. A contemporary political cartoon captured this dynamic under the phrase “telescopic philanthropy”—a tendency to focus moral concern at a distance while neglecting what is closer at hand.

The point I’m trying to make here is not that concern beyond one’s own group is inherently false or wrong.

The point is that moral expansion creates distance.

The farther a concern stretches, the easier it becomes to neglect concrete obligations close at hand: family, neighbors, local order, inherited duties, and the people one is actually responsible for. Abstract compassion can become morally flattering precisely because it asks less of the person expressing it.

Whether one agrees with those criticisms or not, they point to something very real.

A moral order that expands obligation beyond local belonging gains reach, but it also risks losing proportion. It can elevate the stranger while forgetting the neighbor. It can speak beautifully about mankind while failing the people right in front of it.

Christianity extended moral concern beyond tribe and built institutions that carried that vision forward. But it also introduced pressures around authority, interpretation, exclusion, and the limits of acceptable thought.

The good is real, but so is the tension inside it.

Christianity’s inheritance was not simply compassion, dignity, or education. It was a moral architecture: universal in scope, institutional in form, inward in psychology, and unstable once detached from the cultural world that had once held it together.

That brings us to our next inquiry.

Not just what Christianity gave the West, but what kind of order made those outcomes possible.

SECTION II: THE BAD

Truth, Authority, and the Limits of Inquiry

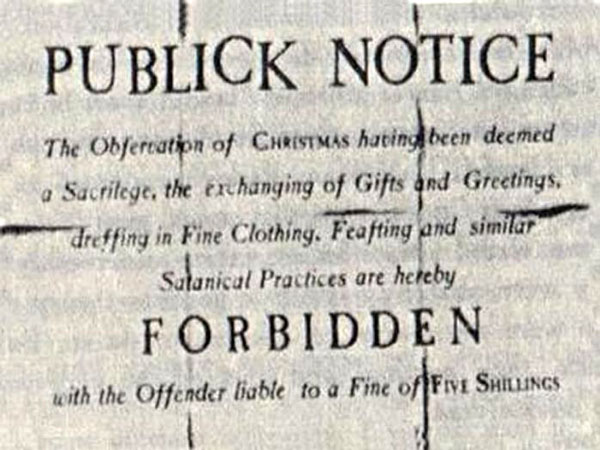

At this point, the issue is not simply what happened when Christianity moved from the margins to power. I’ve explored that elsewhere: the suppression of rival systems, the narrowing of acceptable thought, and the long habit of treating competing worldviews not as alternatives to debate, but as errors to contain.

The deeper question here is more structural.

What kind of religious order produces those outcomes in the first place?

Because the shift was not simply a transfer of power. It was a reorganization of how truth operated, how disagreement was handled, and how legitimacy was defined.

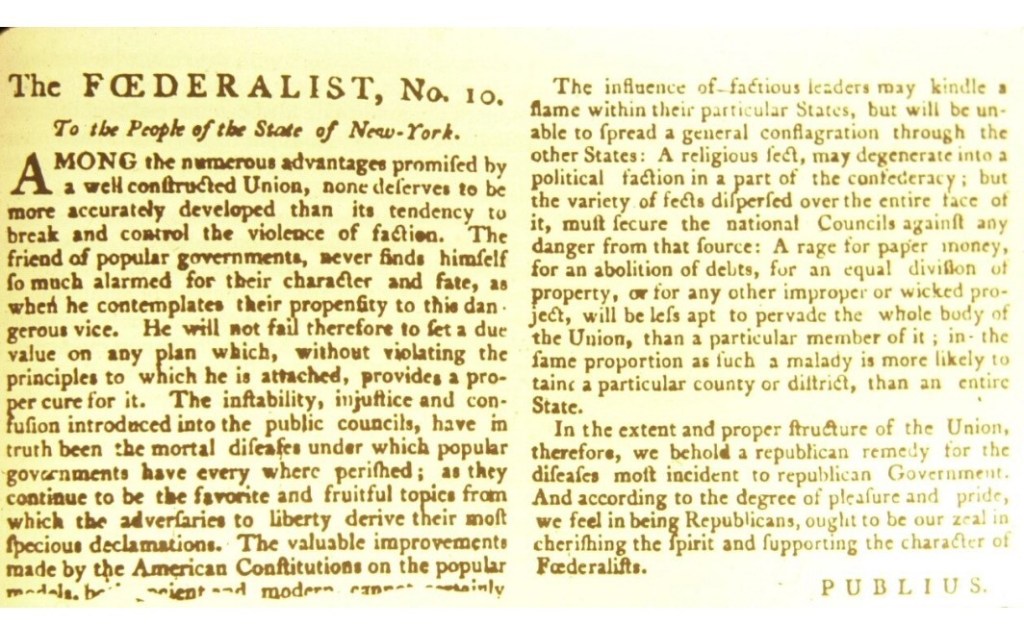

Earlier Greco-Roman religious and philosophical life was not tolerant in the modern sense, but it was more comfortable with multiplicity. Rival schools, local cults, household gods, civic rituals, and philosophical traditions could coexist without requiring one totalizing creed to absorb or eliminate the rest. That did not make the ancient world peaceful or morally pure. It did mean that truth was not always treated as one fragile object that had to be protected from every rival.

The Abrahamic worldview introduced something different, what the Egyptologist Jan Assmann called the “Mosaic distinction.”

It drew a sharper line between true and false in a way that changed the stakes of disagreement. Belief was no longer simply one option among many. It became a dividing line. Once that line was drawn, alternative ways of seeing the world did not remain neutral. They became errors, and error began to carry drastic consequences beyond private belief.

If truth is singular and binding, then the religious order has to decide what to do with everything outside of it. Some ideas are absorbed. Some are tolerated for a time. Others are pushed out entirely. But none of them sit comfortably alongside it anymore. They exist in tension with the claim that one truth must govern above all others.

As we previously discussed, Christianity is often credited with preserving learning and building universities, and that claim is not false. Medieval universities became important institutions for intellectual training, debate, law, theology, medicine, and philosophy. They helped organize knowledge and gave scholastic inquiry a durable form.

But that achievement has to be kept in proportion.

The medieval university was an achievement, but it was not a recovery of classical freedom. It was classical inheritance under theological supervision.

Ancient philosophy could be studied, but it had to be reconciled with Christian doctrine. Aristotle could return, but not as Aristotle alone. He had to be interpreted through Christian categories, corrected where necessary, and placed beneath revealed truth. Reason was permitted, even sharpened, but it was not sovereign.

The medieval university did not represent inquiry on open ground. It represented inquiry inside boundaries. Reason could clarify doctrine, defend doctrine, organize doctrine, and reconcile contradictions within inherited authorities. But when reason pressed too far against the architecture of belief, the limits became quite visible.

That does not make medieval learning worthless. It makes it conditional.

And that conditionality is the point.

Christian Europe did not simply preserve the classical world. It received it, edited it, baptized it, and constrained it. What could be made useful to the Christian order survived more easily. What threatened that order did not.

This is the kind of intellectual narrowing later critics would recognize in Christianity’s relationship to philosophy. Heidegger’s critique of onto-theology is not aimed at Christianity alone, but it helps name the pattern: open-ended questioning becomes absorbed into a prior explanatory order. Instead of wonder remaining primary, inquiry is routed through established claims about creation, causality, divine order, sin, and salvation.

The question is no longer allowed to remain fully open.

It has to be answered inside the architecture of doctrine.

Once orthodoxy is established, it operates within boundaries that have already been set, and stepping outside those boundaries starts to carry not just intellectual consequences, but social ones. Access to authority, education, and influence becomes tied, at least in part, to alignment.

At that point, belief is no longer just something people hold. It becomes something that moves outward, seeking to correct and expand.

SECTION III: THE UGLY

Universalism, Power, and the Moral Afterlife

By the time you reach the modern West, the question is no longer whether Christianity shaped it. That much is obvious. The deeper issue is what, exactly, it left behind, and what happens when the conditions that once sustained that inheritance begin to unravel.

Christianity did not simply introduce a set of beliefs and then fade as those beliefs weakened. It reorganized moral life at a level that persists long after doctrine loses its authority. It changed how individuals understood themselves, how they related to others, where moral responsibility resided, and how truth was expected to move through the world.

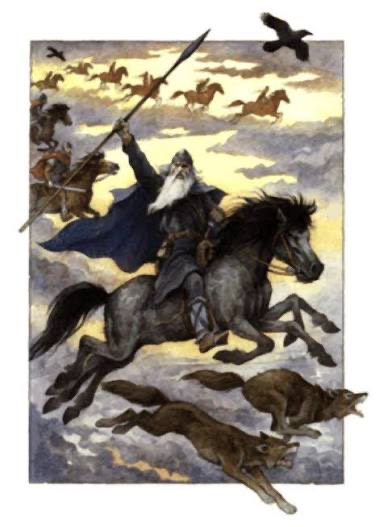

The ugly side of the Christian inheritance is not merely universalism. It is universalism with a missionary engine.

Christianity does not simply say, “This is true.” It says truth must be spread. Error must be corrected. The world must be brought into submission to the saving order. That structure changes the meaning of difference. A rival worldview is not merely foreign, local, or ancestral. It becomes spiritual error, even something demonic.

And once a difference becomes an error, correction can be justified as mercy.

The religious world Christianity emerges from was already in tension with the surrounding Greek and Roman order. Second Temple Judaism didn’t simply blend into Hellenistic life. Again and again, it resisted it—politically, culturally, religiously.

D. H. Lawrence saw this tendency clearly. In Apocalypse, he describes a fear-driven impulse within Christianity—a refusal to leave other ways of understanding the world intact. Not just disagreement, but the drive to overcome, absorb, or eliminate what stands outside the truth.

That instinct is already embedded in the apocalyptic world Christianity emerges from. Second Temple Judaism carries expectations of final judgment, cosmic conflict, and the ultimate victory of a single, rightful order: the coming of the Messiah (Moshiach). Christianity inherits that framework and gives it a wider reach.

That is where Christianity’s relationship to Rome becomes essential. Christian universalism did not spread on its own. It moved through late imperial Roman systems: roads, cities, law, administration, literacy, political centralization, and habits of governance already trained toward scale. The faith did not merely conquer Rome. It also inherited Rome’s machinery.

Rome gave Christianity infrastructure. Christianity gave Rome a sacred moral horizon. Together, they helped produce a form of power that could move across peoples, lands, languages, and customs while claiming to operate in the name of truth rather than mere domination.

This is also why Christianity receives too much credit for goods it did not invent.

One reason it’s treated as the source of Western morality is that it became dominant enough to absorb older goods and narrate them backward as Christian achievements. Care for the poor, philosophical inquiry, civic duty, moral discipline, education, and concern for the common good did not appear out of nowhere when Christianity entered history. Many of these were already present in Greek, Roman, Jewish, and local European worlds. Christianity reorganized them inside its own story.

That reorganization gave them reach.

But it also gave them a new master narrative.

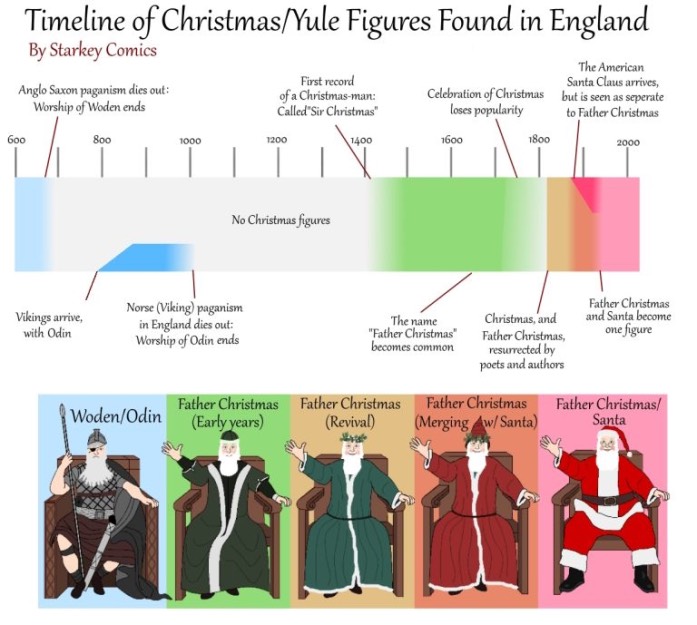

Older traditions were often embedded in particular peoples, places, households, ancestors, cities, gods, calendars, and sacred landscapes. Religion was not just a private belief system. It was woven into the life of a people. Christianity altered that relationship by making belief portable. It could cross borders, override local cults, and create a community defined less by blood, land, or inherited custom than by shared confession.

That is one of the most consequential shifts in Western history.

Christianity weakened the older link between people, place, ancestors, and gods. It did not erase those attachments overnight, and in practice it often absorbed local festivals, sacred sites, and folk customs. But the deeper logic changed. The highest belonging was no longer rooted primarily in the local or ancestral. It was relocated into a universal religious identity.

Conversion, then, was not merely persuasion. It was the remaking of belonging.

A people could be separated from their gods, their rituals, their inherited calendar, their sacred places, and their ancestral memory, then folded into a new universal story that claimed to redeem them while also replacing the world that formed them.

Not every conversion was violent. That would be too simple. Some conversions were gradual, political, strategic, sincere, blended, or partial. But once a universal truth claim is tied to salvation, rival traditions do not remain equal neighbors. They become obstacles to be overcome, errors to be corrected, or remnants to be absorbed.

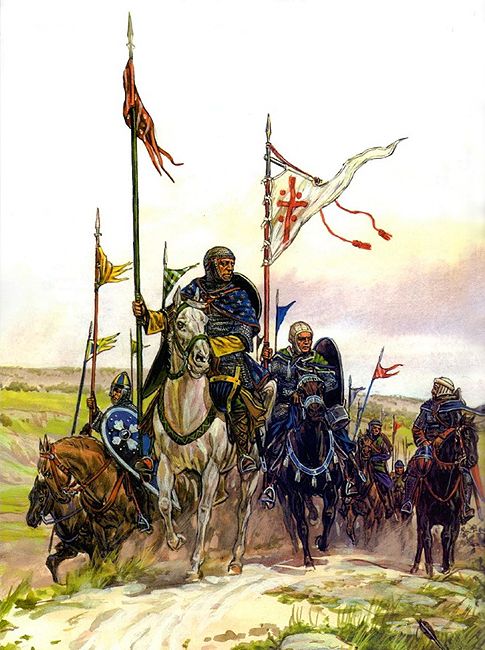

The Crusades make this structure visible in its most explicit and militarized form.

They were not only political wars. They were religious wars shaped by sacred geography, penitential promise, and the belief that violence could be folded into a redemptive order.

The Crusades did not simply mobilize Europe—they redirected it toward Jerusalem, a sacred center that was not its own.

That does not mean every participant had the same motive, and it does not mean politics, land, wealth, status, and military ambition were irrelevant. Of course they mattered. But the crusading imagination reveals something specific: once warfare is placed inside a sacred story, conquest can be interpreted as obedience, purification, defense, or salvation.

That is the danger of missionary structure joined to power.

It sanctifies expansion.

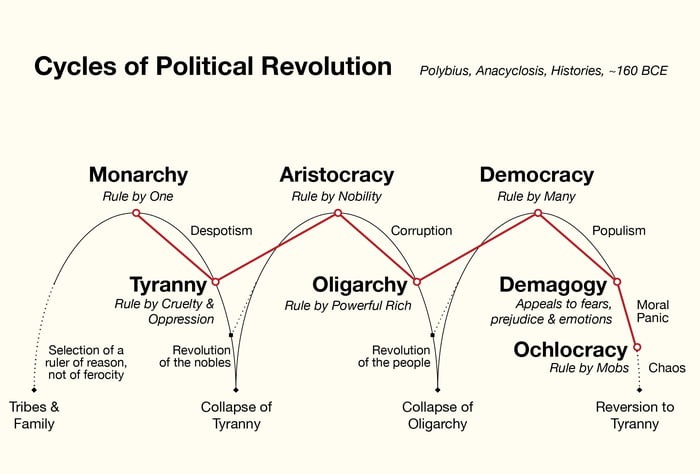

And this is not confined to medieval history. The same basic pattern can reappear whenever politics inherits religious moral intensity. The opponent is no longer merely wrong about policy. He becomes a threat to truth, justice, salvation, progress, safety, democracy, equality, or whatever sacred term now carries the old theological weight.

At that point, disagreement becomes harder to contain.

The modern West inherited this moral intensity even as explicit Christian authority declined. Most people in the modern West were born into a world in which Christianity had already begun to lose its grip, but nothing had fully replaced it. The rituals became optional. The authority fractured. Yet many of the underlying assumptions remained intact.

What had once been explicitly theological was gradually translated into secular terms.

At the center of that structure is a form of universalism Christianity helped entrench: the idea that all people stand beneath one moral order, that identity is secondary to a broader human category, and that truth applies universally rather than locally. That assumption did not disappear with religious decline. It migrated.

Liberalism, in many of its modern forms, carries that template forward: the individual abstracted from place, lineage, inherited duty, and thick communal belonging, then positioned inside a universal framework of rights, equality, and moral expectation.

The language changes. The structure does not.

The West moved from Christian universalism to liberal universalism without seriously interrogating the universalism itself. It replaced theological justification with philosophical or political justification, but it retained the assumption that the highest moral order transcends particular identities rather than emerging from them.

And what carries forward is not only universal morality, but missionary mentality.

Salvation becomes progress. Sin becomes injustice. Heresy becomes hate. Evangelism becomes activism. The world must still be corrected. The morally backward must still be brought into line.

And the irony is hard to miss. The same people who pride themselves on rejecting religious dogma often reproduce its structure almost perfectly—moral certainty, heresy-hunting, and the impulse to correct and convert, just without calling it religion.

You can see this most clearly in the modern left, especially at its activist and radical edges. What presents itself as political theory often behaves like secularized salvation mythology. The underlying structure is unchanged: the world is broken, and the masses need liberation. God is removed, but everything else remains. The heretics still need correction. Sin becomes hierarchy. Salvation becomes self-rule. The missionary doesn’t disappear; he just changes form.

It still sorts people into the righteous and the condemned. It still creates moral taboos. It still treats disagreement as contamination. It still imagines that the world can be redeemed if only the right moral order is imposed—with enough force, shame, education, policy, or institutional pressure.

That is not the absence of Christianity.

It is part of its afterlife.

Later European expansion, and even modern geopolitical projects, often operate within the same structure—intervention framed as liberation, reform, or progress.

Whenever universal moral claims are aligned with power and tied to the belief that truth must spread, action begins to feel necessary rather than optional.

To understand why this pattern persists, and why it adapts so easily across different historical contexts, you have to look at what is happening at a deeper level: not just in institutions or empires, but within the individual.

Because the most enduring change Christianity introduces is not only institutional.

It is psychological. It alters where morality is located.

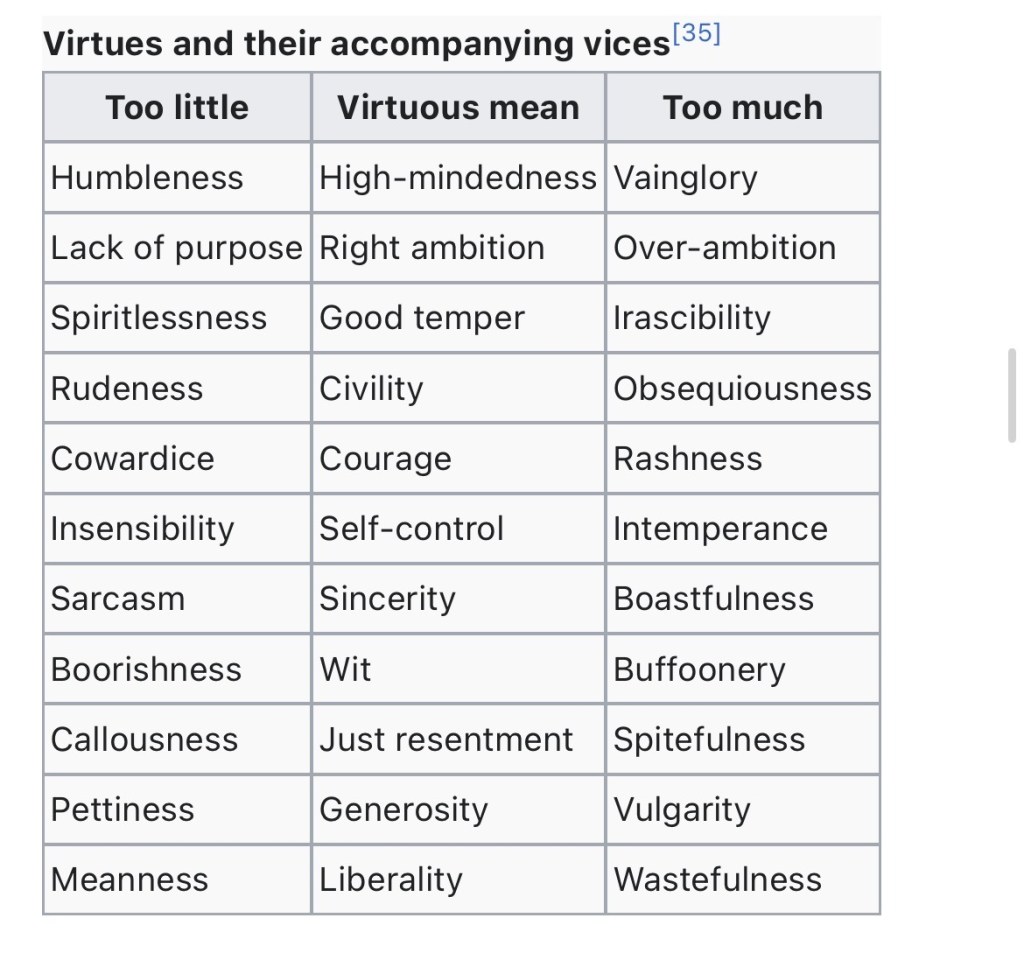

In earlier classical traditions, especially in Aristotle, the moral life is oriented outward. The Greek conception of eudaimonia assumes that human beings can develop toward excellence. Flourishing is cultivated through practice, discipline, rational activity, and participation in the world. Character is formed through what one does, and the moral life is embodied and lived over time within a shared civic and social context.

Christianity, especially through Augustine of Hippo, redirects that focus inward.

The problem is no longer simply what a person does, but what a person is. Human nature itself becomes suspect, marked from the beginning. The doctrine of original sin reframes the individual not as someone developing toward excellence, but as someone starting already compromised. This is not just about isolated wrongdoing. It is about a baseline disorder built into human existence, transmitted across generations, shaping inclination before any conscious choice is made.

From that premise, morality reorganizes itself. If the problem lies within, then moral evaluation cannot remain limited to outward behavior. It extends inward, into thought, desire, intention, and impulse: the parts of life that no one else sees but that are still treated as morally significant.

This becomes structured into daily practice. Monastic traditions classify internal states (temptation, pride, doubt, desire) as if they were items that could be named, tracked, and corrected. Authority expands beyond regulating behavior into defining what counts as acceptable thought, shaping not just action but the boundaries of the inner life itself.

Once morality relocates inward, the primary site of regulation is no longer only the community. It is the individual mind, where conscience, guilt, confession, fear, and self-regulation operate continuously, often without any visible external enforcement.

You can see the implications of this in the conflict between Augustine and Pelagius. Pelagius emphasizes human capacity: the ability to choose, improve, and take responsibility for moral development. Augustine rejects that position, insisting on dependence—on God’s grace, on divine intervention, on something beyond human effort.

This is not only a theological disagreement.

It is also a question about agency.

If the individual cannot fully rely on their own capacity to move toward the good, then moral development becomes entangled with God’s authority. Responsibility does not disappear, but it no longer stands on its own. It becomes mediated, conditioned, and in some cases limited, as the individual is situated within a framework that places ultimate transformation outside of purely human reach.

Over time, that tension begins to shape intellectual life as well. Historians like Charles Freeman do not argue that inquiry simply disappeared, but that the conditions surrounding it changed. When belief becomes tied to salvation, and when error carries not only intellectual but spiritual consequences, curiosity itself begins to look different. Questions are no longer neutral exercises. They take on moral weight, and in certain contexts, they begin to carry risk.

Writers like Thomas Paine noticed this and pushed directly against the idea that truth can rest on inherited authority. In The Age of Reason, Paine argues that revelation, once it passes through human hands, can no longer function as unquestionable truth. What begins as divine claim becomes human interpretation, and therefore something that must be examined rather than simply accepted. That move cuts directly against the structure that treats questioning as risk. It reopens the possibility that belief itself should be subject to the same scrutiny as anything else.

Mark A. Noll describes a similar pattern in later Christian intellectual culture: a tendency to preserve belief rather than extend it. Questioning is not always welcomed as curiosity. It can be interpreted as disloyalty, a sign that alignment is weakening rather than deepening. The safest position, in that environment, becomes one of conformity rather than exploration.

The obedient mind is the secure mind.

This is not new. It is already visible earlier in the tradition. The same system that could produce a figure like Abelard, whose Sic et Non set conflicting authorities side by side to provoke questioning, also produced the conditions Noll describes, where belief becomes something to preserve rather than extend.

The instinct to monitor thought, to moralize disagreement, to treat deviation as more than error—those habits do not emerge in a vacuum. They develop within specific historical conditions, and they persist even as the surrounding language changes.

This is why the internal reorganization matters.

It is not only about doctrine.

It is about how individuals learn to relate to themselves.

If Augustine relocates morality inward, Protestantism amplifies and personalizes that shift. The individual is placed in more direct relation to truth, expected to read, interpret, examine, and align himself without the same mediating structures that once guided that process. Authority does not vanish. It becomes more diffuse and more demanding.

The church hierarchy may weaken in some places, but new pressures emerge through scripture, sermon, household discipline, community surveillance, literacy, and conscience. The individual is made more responsible before God, but also more exposed.

The burden of interpretation moves further into the self.

Over time, that inward structure detaches from the communal and cultural worlds that once gave it shape. What remains is a society of individuals expected to interpret, justify, and regulate themselves inside a universal moral order, but without a shared culture capable of holding that process together.

That misalignment becomes visible in how people interpret conflict, identity, history, and political life.

In modern America, this can still be seen in forms of biblical literalism, dispensationalism, and end-times prophecy that shape how many Christians understand Israel, war, nationhood, and world events. These beliefs do not remain private. They influence political imagination. They affect how people interpret history, alliances, enemies, and what they believe is inevitable or divinely sanctioned.

In this context, belief stops being just belief. It starts shaping how everything else is seen.

That is the same mechanism operating in another key. The pattern that once defined orthodoxy and constrained variation does not disappear. It adapts as the cultural environment shifts. The language evolves, but the underlying habit remains: truth is singular, error is dangerous, and those outside the moral order must be corrected, converted, contained, or cast out.

What this reveals is not a simple story of progress or decline.

Christianity did not leave behind a stable moral foundation that the West either followed or abandoned. It left behind a set of interacting pressures: universalism and particular identity, internalized morality and external authority, individual responsibility and collective order, compassion and conquest, salvation and exclusion.

For a time, those pressures could be held in relative balance. That balance no longer holds.

The institutions remain, but they no longer command the same trust. The moral instincts remain, but they are no longer guided by a shared tradition. The universal language remains, but it floats above increasingly fractured peoples, places, and loyalties.

Conflict becomes more moralized. Disagreement becomes harder to contain.

This is why the modern West feels both thin and volatile.

Thin, because inherited forms of continuity have weakened.

Volatile, because the moral pressure embedded in the inheritance remains, now operating without the older structures that once gave it proportion.

That is the condition the modern West has inherited.

CONCLUSION: Why the West Still Cannot Escape the Problem

The Christian inheritance of the West cannot be reduced to either gratitude or resentment.

It cultivated moral concern, gave meaning to suffering, built durable institutions, and preserved and transmitted knowledge, even as that knowledge was filtered through doctrine. It created a shared moral vocabulary capable of binding large populations together.

But it also changed the terms of belonging.

It loosened religion from peoplehood, place, ancestry, and local custom. It made belief portable. It turned truth into something singular and binding, making disagreement morally charged. Once rival traditions became errors rather than neighbors, the pressure to absorb, correct, or suppress them followed naturally.

The West did not abandon Christianity so much as carry its habits forward. The missionary impulse remained. The abstract individual remained. The suspicion of rooted identity remained. Social justice became the new end times.

That is why a return to Christianity does not solve the problem. It would not restore a stable foundation but reassert one layer of the inheritance while leaving its tensions unresolved.

Secular liberalism does not solve it either. It often preserves the universalism while stripping away the cultural limits that once gave it proportion, asking people to live as abstract individuals inside a moral framework detached from place, memory, and inherited obligation.

What remains is not a coherent worldview, but a contradictory one.

From the beginning, the inheritance carried competing impulses. Early Christianity emerged from an apocalyptic environment while also developing moral and institutional frameworks for life within the world. Over time, those tensions were not resolved but reworked and emphasized in different ways.

Within Protestantism alone, some strands treated the world as something to be ordered and reformed, energizing movements like abolition, while others emphasized its corruption and eventual end, orienting life toward endurance and escape. The divergence is not a break from the tradition, but a difference in emphasis within it.

The result is a system that can point in opposite directions while still claiming the same foundation.

This is not a foundation a civilization can stand on.

A civilization needs moral scale, but also proportion. Compassion, but not so abstract that it forgets its own people. Rights, but not detached from duty. Inquiry, but not subordinated to sacred certainty. Space for disagreement, but enough shared identity to keep it from becoming civilizational warfare.

Above all, it needs rooted obligations.

A civilization cannot survive on abstract principles alone. It needs loyalty, shared memory, boundaries, place, and a people capable of recognizing what is theirs to preserve.

Because removing structure does not remove power. It removes the forms that make power visible and accountable. And when that happens, power does not disappear. It shifts—into forms that are harder to see and harder to resist.

We are not standing outside this inheritance.

We are still working within it.

And the task is not to romanticize Christianity, completely demonize it, or pretend we have escaped it, but to understand what it absorbed, what it built, what it destabilized, and what it left behind clearly enough to stop repeating its most destructive patterns.

Sources

Abelard, Peter. Sic et Non.

Aristotle. Nicomachean Ethics.

Aristotle. Politics.

Arktos Journal and Laurent Guyénot. “The Crusading Civilisation: From the Middle Ages to the Middle East.” Substack, April 3, 2026.

Atkinson, Kenneth. “Judean Piracy, Judea and Parthia, and the Roman Annexation of Judea: The Evidence of Pompeius Trogus.” Electrum 29 (2022): 127–145. https://doi.org/10.4467/20800909EL.22.009.15779

Augustine. Confessions.

Augustine. The City of God.

Brown, Peter. Augustine of Hippo: A Biography. Berkeley: University of California Press, 2000.

Burkert, Walter. The Orientalizing Revolution: Near Eastern Influence on Greek Culture in the Early Archaic Age. Cambridge, MA: Harvard University Press, 1992.

Carlyle, Thomas. “Occasional Discourse on the Negro Question.”

Doner, Colonel V. “Cognitive Dissonance of Political Activists, Or Whatever Happened to the Religious Right?” Chalcedon, July 1, 1999.

Freeman, Charles. The Closing of the Western Mind: The Rise of Faith and the Fall of Reason. London: Heinemann, 2002.

Freeman, Charles. The Reopening of the Western Mind: The Resurgence of Intellectual Life from the End of Antiquity to the Dawn of the Enlightenment. London: Head of Zeus, 2023.

Lawrence, D. H. Apocalypse. 1931.

Locke, John. A Letter Concerning Toleration. 1689.

MacCulloch, Diarmaid. Reformation: Europe’s House Divided, 1490–1700. London: Allen Lane, 2003.

MacMhaolain, Aodhan. “The Transmission of Fire: How To Keep Tradition Burning.” The Enchiridion, April 9, 2026.

Montesquieu, Charles de Secondat. The Spirit of the Laws. 1748.

Noll, Mark A. The Scandal of the Evangelical Mind. Grand Rapids: Eerdmans, 1994.

Paine, Thomas. The Age of Reason. 1794–1807.

Paine, Thomas. Common Sense. 1776.

Thorsteinsson, Runar M. Roman Christianity and Roman Stoicism: A Comparative Study of Ancient Morality. Oxford: Oxford University Press, 2010.

West, Martin L. The East Face of Helicon: West Asiatic Elements in Greek Poetry and Myth. Oxford: Clarendon Press, 1997.