What Liberty Actually Depends On

Hey hey, welcome back to Taste of Truth Tuesdays. Today’s episode is where we dig into philosophy, culture, history, and the ideas that have shaped the world we’re living in—everything from classical texts to the American founding documents that are still very much relevant to how we should think about freedom today.

There’s a growing sense that something isn’t working.

You see it in the fragmentation of identity, the erosion of shared norms, and the breakdown of trust across institutions.

You don’t have to look very hard to notice it.

People don’t trust elections, medicine, or the media—sometimes all at once, and often for completely different reasons.

Dating is “freer” than it’s ever been, and yet it feels more unstable, more transactional, and more confusing than most people expected.

Corporations speak like moral authorities, issuing statements about justice and truth, while operating through incentives that have nothing to do with either.

Everything is still functioning. But less of it feels legitimate.

In my last piece, I traced one part of this problem back to a common assumption, that Christianity built the foundations of the West. But when you actually follow the development of those ideas, much of what we associate with Western thought—natural law, reason, and the structure of political life—has deeper roots in the Greco-Roman philosophical tradition.

That matters, because the frameworks we inherit shape what we think freedom is, and what we expect it to do.

This piece is a continuation of that question. Not only about where those ideas came from, but about what they require to hold together.

Because a free society doesn’t sustain itself on freedom alone. It depends on discipline, restraint, and a shared understanding of limits—conditions that the system itself cannot produce.

And when those begin to erode, the system doesn’t just break. It follows a pattern that’s been observed for a very long time.

I. The Fear Beneath the Founding

This isn’t a new problem.

The relationship between freedom and instability shows up wherever societies try to govern themselves.

The American founding emerged out of that concern. The people designing the system weren’t just thinking about how to create liberty. They were trying to understand why it collapses.

The colonists weren’t casually referencing Rome. English translations of Vertot’s Revolutions that Happened in the Government of the Roman Republic (1720) were in almost every library, private or institutional, in British North America. They studied how free societies decay, how power shifts from shared trust into something self-serving, and how internal corruption (not just external threat) brings systems down.

They believed they were watching it happen in real time.

What they took from antiquity was not blind optimism about freedom, but caution.

And this wasn’t limited to classical history. As Bernard Bailyn observed, the colonists were immersed in dense and serious political literature, shaped by philosophy and by sustained reflection on the problem of power.

Part of what they were working with was an older line of thought running through Greek and Roman philosophy.

The idea that human life is not directionless. That there are patterns to how people live, and that some ways of living lead to stability and flourishing, while others lead to breakdown.

You can already see the foundation of this in Aristotle. He didn’t use the term “natural law,” but the structure is there. Human beings have a nature, and flourishing comes from living in alignment with it—not whatever we happen to want in the moment, but a way of life shaped by discipline, balance, and the cultivation of virtue over time.

The Stoics make this more explicit. They describe the world as ordered by reason—logos—and argue that human beings can come to understand that order.

From that perspective, moral truth isn’t something we invent. It’s something we discover. And law, at its best, should reflect that underlying structure rather than contradict it.

By the time you get to Rome, this idea is articulated more directly. Cicero describes a true law grounded in right reason and in agreement with nature—something universal, not dependent on custom or preference, but rooted in reality itself.

These ideas don’t disappear. They are carried forward and developed.

Christian thinkers later absorb and expand them, especially through Thomas Aquinas, who integrates Greek philosophy and Roman legal thought into a more explicit framework of natural law. And that influence is real. It’s part of the Western story whether we like it or not.

But that’s not the point of this piece.

What matters here is that by the time you reach the early modern period, this idea of a structured moral order—something that places limits on behavior and grounds freedom in discipline—is already well established.

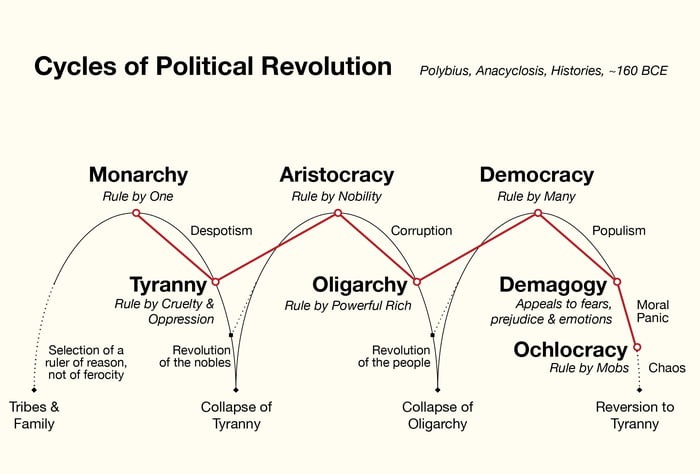

You can see that continuity clearly in how the Founders and colonists read earlier political thought. In those earlier sources, Plato describes how political systems degrade over time, arguing that excessive and undisciplined freedom can produce disorder, which eventually leads people to accept tyranny in the search for stability. Aristotle traces how democracies collapse when law gives way to persuasion and personality. Polybius maps the recurring cycle through which governments rise and decay.

What Polybius described was called anacyclosis, a recurring cycle of political systems. Governments begin in relatively stable forms, rule by one, by a few, or by many, but over time they degrade. Kingship becomes tyranny. Aristocracy becomes oligarchy. Democracy, when it loses discipline, collapses into what he called ochlocracy, rule by the mob.

This wasn’t abstract to the colonists. Like I said, they believed they were watching this pattern unfold in real time. And it shows up just as clearly in the political language of the founding era itself.

As Bailyn explains, monarchy, aristocracy, and democracy were each seen as capable of producing human happiness. But left unchecked, each would inevitably collapse into its corrupt form: tyranny, oligarchy, or mob rule.

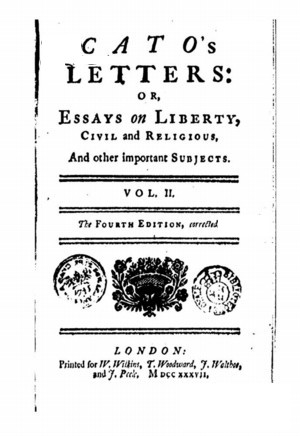

Writings like Cato’s Letters were widely read in the colonies and helped shape how ordinary people understood government, power, and liberty.

What’s striking when you read Cato more closely is how little confidence its authors placed in moral restraint alone. It doesn’t describe freedom as unlimited expression or personal autonomy. The idea that belief, fear of God, or good intentions would keep power in check is treated as dangerously naive. Power is not self-regulating, and it is not made safe by the character or beliefs of those who hold it. It has to be exposed, limited, and actively resisted, because even institutions and ideas meant to restrain it, including religion, can be repurposed to justify its expansion.

It describes government more as a trust—one that exists to protect the conditions that make ordinary life possible.

As Cato writes:

“Power is like fire; it warms, it burns, it destroys. It is a dangerous servant and a fearful master”

And more directly:

“What is government, but a trust committed…that everyone may, with the more security, attend upon his own?”

The assumption is clear. Power must be restrained. Freedom depends on it.

But in Cato’s framing, that restraint doesn’t come from structure alone. It depends on constant exposure and resistance. Freedom of speech and a free press aren’t treated as abstract rights, but as active safeguards—tools for uncovering corruption and preventing power from consolidating unchecked. The logic is simple but demanding: power does not correct itself. It expands, protects its own interests, and, if left unchallenged, begins to operate beyond the limits it was given.

The point of understanding the political cycles of revolution wasn’t to say that any one system was uniquely flawed. It was that all systems are vulnerable to the same underlying problem:

Human nature.

Self-interest eventually creeps in. Restraint erodes. Power shifts from a trust into something personal and extractive.

And once that shift happens, the form of government matters less than the character of the people within it. That thread runs directly into the founding.

The American system wasn’t designed as a pure democracy. It was an attempt to stabilize a problem earlier thinkers had already identified.

Rather than choosing a single form of government, the founders built a mixed system, blending elements of rule by one, rule by a few, and rule by many. An executive to act with decisiveness. A Senate to provide deliberation and continuity. A House to represent the people more directly.

This wasn’t accidental.

It reflected an awareness that each form of government carries its own risks, and that concentrating power in any one place tends to accelerate its corruption.

By distributing power across different institutions, the goal was to create tension within the system itself. Ambition would check ambition. Competing interests would slow the consolidation of power.

From my understanding, they weren’t trying to escape the cycle Polybius described. They were trying to manage it.

They weren’t designing a perfect system. They were attempting to design one built to withstand imperfect people.

But even that depended on something it could not guarantee.

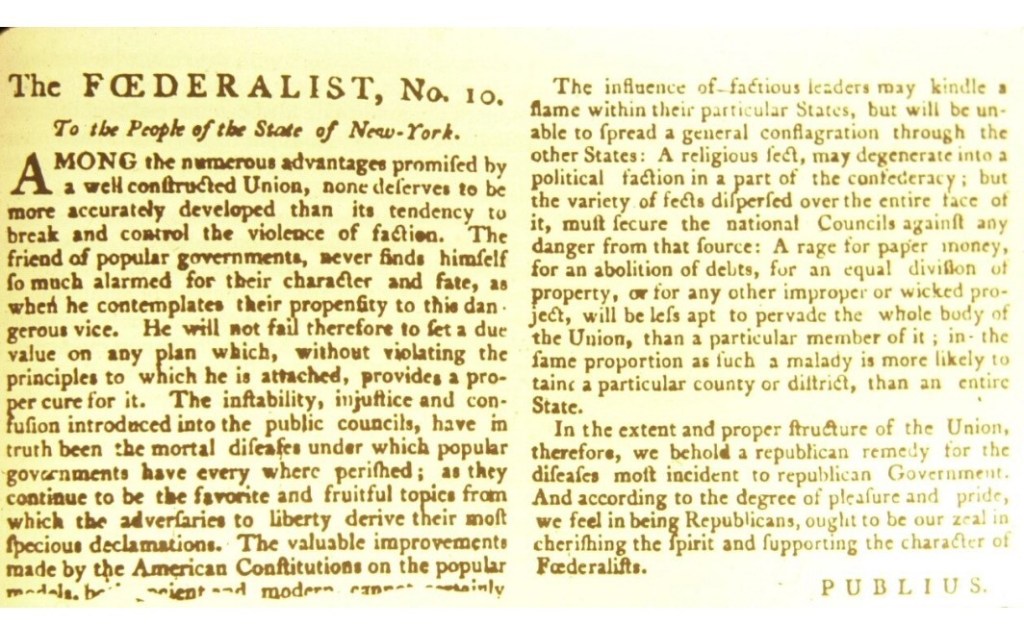

In Federalist No. 10, James Madison writes:

“The latent causes of faction are thus sown in the nature of man.”

He’s not describing a temporary problem. He’s describing a permanent one.

Differences in opinion, interests, wealth, and temperament don’t disappear. They organize. They form groups. And those groups will sometimes pursue aims that are at odds with the rights of others or the stability of the system itself.

Madison’s conclusion is straightforward:

“The causes of faction cannot be removed… relief is only to be sought in the means of controlling its effects.”

That distinction is crucial. He doesn’t try to eliminate conflict or force unity. He assumes conflict is inevitable and builds a system around that reality.

Instead of requiring perfect discipline from individuals, the structure disperses power, multiplies interests, and forces negotiation. Representation slows decision-making. Scale makes domination more difficult.

Freedom is preserved not by removing conflict, but by structuring it.

“They looked ahead with anxiety, not confidence. Because they believed liberty was collapsing everywhere. New tyrannies had spread like plagues. The world had become, in their words, ‘a slaughterhouse.’ Across the globe: Rulers of the East were almost universally absolute tyrants… Africa was described as scenes of tyranny, barbarism, confusion and violence. France ruled by arbitrary authority. Prussia under absolute government. Sweden and Denmark had ‘sold their liberties.’ Rome burdened by civil and religious control. Germany a hundred-headed hydra. Poland consumed by chaos. Only Britain (and the colonies) were believed to still hold onto liberty. And even there… barely.”

From revolutionary-era political writings, as compiled by Bernard Bailyn

II. Ordered Liberty and the Kind of Person It Requires

The founders believed in liberty, but not as an unlimited good. They believed in ordered liberty. Freedom that exists within a framework of responsibility, discipline, and civic virtue. The system they designed assumed a certain kind of person, one capable of self-governance, restraint, and participation in a shared moral world.

That assumption was not optional. It was structural. It’s easy to miss how much is built into that.

And this is where the modern tension appears, because the current understanding of freedom begins to diverge from its origins.

Classical liberalism, in its earlier form, was not about detaching individuals from all institutions, identities, or relationships, as Deneen argues in Why Liberalism Failed. It was about protecting individuals from tyranny while preserving the conditions necessary for a functioning society. It assumed the continued existence of family, community, religious frameworks, and shared norms.

But Deneen is right about one thing: early liberal thought did introduce something new.

John Locke, for example, reframed institutions like marriage as voluntary associations rather than fixed, inherited structures. That didn’t mean early liberal political philosophy was designed to erode the family. But it did change how those institutions were understood. It placed individual choice alongside social stability in a way that could be expanded over time.

To understand where this expansion comes from, you have to look at what came before it.

Without freedom of thought, there can be no such thing as wisdom; and no such thing as publick liberty, without freedom of speech: Which is the right of every man, as far as by it he does not hurt and control the right of another; and this is the only check which it ought to suffer, the only bounds which it ought to know.

-Cato’s Letters, No. 15

III. The Moral Inheritance of the West

In many pre-Christian societies, moral life wasn’t organized primarily around abstract rules or universal doctrines, but around continuity. Identity was tied to lineage, family, and inherited roles. Authority came not from individual preference, but from what had been passed down—customs, obligations, and expectations shaped over generations. To live well wasn’t just a personal project. It meant upholding something larger than yourself: maintaining the reputation of your family, fulfilling your role within a community, and carrying forward a way of life that you didn’t create but were responsible for preserving.

You can see how this played out in places like Anglo-Saxon England, where social structure and legal life were more embedded in family and local custom than in centralized doctrine. Women, for example, could own property, inherit land, appear in legal proceedings, and in some cases exercise real economic and political influence. These weren’t modern equality frameworks, but they complicate the assumption that agency and rights only emerge through later “progress.”

That structure did more than organize society. It created cohesion. It gave people a shared reference point for what mattered, what was expected, and what should be restrained—even when no one was watching. Authority wasn’t something constantly renegotiated. It was inherited, lived, and reinforced through participation in a shared way of life.

Greek and Roman life was also structured around civic duty, hierarchy, and inherited roles.

Their moral frameworks reflected that structure. Thinkers like Aristotle emphasized virtue as balance, habits cultivated over time within a community, oriented toward harmony and the common good.

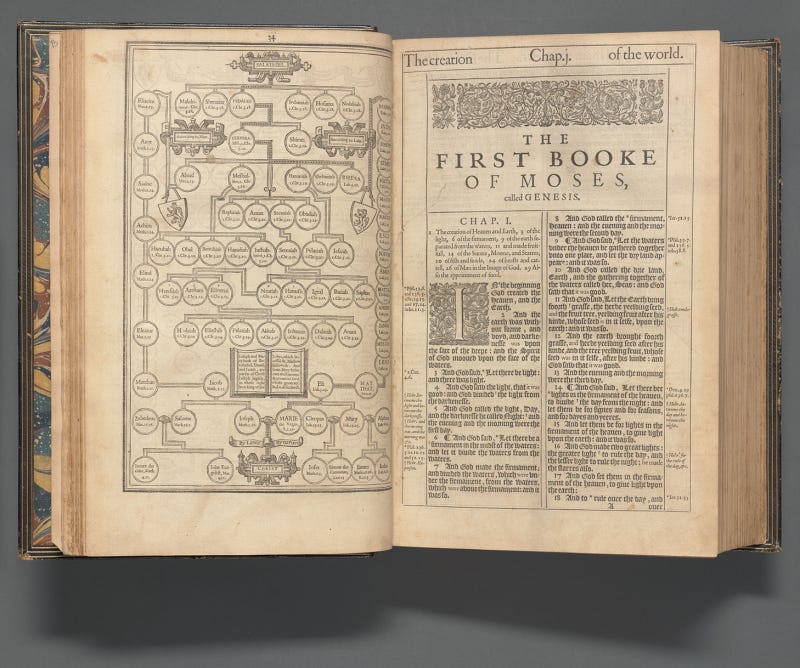

As Christianity spread, moral authority became less tied to lineage and local custom, and more anchored in universal doctrine—rules that applied across communities, not just within them. Obligation didn’t vanish, but it was increasingly reframed. Less about inherited roles within a specific people, more about the individual’s relationship to a broader moral order.

That shift didn’t happen all at once, and it’s not a simple story. The development of early Christianity, its integration into the Roman Empire, and the ways it reshaped intellectual life and authority are far more complex than a few paragraphs can capture here. I’ve gone into that in more detail elsewhere, particularly around the Constantinian period and the rise of revelation and fall of reason.

This development intensifies further with the rise of Protestantism, where that reframing of obligation becomes even more explicit. You can see it in the movement from the Seven Deadly Sins to the Ten Commandments as a dominant moral framework.

Image: Pieter van der Heyden, after Pieter Bruegel the Elder; published by Hieronymus Cock, 1558 (all Netherlandish).

The Seven Deadly Sins, pride, greed, lust, envy, gluttony, wrath, and sloth, are not rules in the strict sense. They describe internal dispositions, patterns of character that distort judgment and pull a person out of balance. They are concerned with formation, with who you are becoming.

The Ten Commandments, by contrast, are structured as prohibitions. You shall not. They define boundaries, obedience, and transgression in relation to divine authority.

Both frameworks aim at moral order. But they operate differently. One is oriented toward the cultivation of character within a shared moral world. The other emphasizes compliance, law, and accountability before God.

The Protestant Reformation further reduced the role of mediating institutions, emphasizing personal conscience, direct access to scripture, and an individual relationship to truth. Authority became less external and more internalized, but also more individualized and less uniformly shared.

The emphasis is unmistakable. Moral responsibility is no longer primarily inherited or communal, but individual and direct.

This did not dissolve the community. But it did begin to relocate the moral center of gravity, from the maintenance of balance within a community, to the accountability of the individual before God.

A political system built on individual rights and self-governance emerged from a cultural framework that had already begun to center moral responsibility at the level of the individual.

At the same time, Christianity reshaped how the natural world was understood. Earlier traditions often treated nature as infused with meaning, order, or even divinity. Christianity maintained that the world was ordered, but no longer sacred in itself. It was created, not divine.

That distinction introduced a kind of distance. A world that is no longer sacred in itself becomes, over time, easier to treat as something external, something to study, measure, and ultimately use.

None of these shifts were inherently destabilizing on their own. But they altered the underlying framework.

Over time, they contributed to a gradual reorientation, one that made it easier to conceive of the individual as separate, autonomous, and capable of standing apart from inherited structures.

That development would later be expanded and amplified through liberal thought.

But the point is not that Protestant Christianity caused modern individualism. It is that it helped make it thinkable.

By the time you reach the Enlightenment and the American founding, those earlier shifts had not disappeared. They had been carried forward and reworked into a new framework—one increasingly shaped by reason, not as a rejection of religion entirely, but as a refusal to let authority go unquestioned simply because it claims moral or divine legitimacy.

The state of nature has a law of nature to govern it, which obliges every one: and reason, which is that law, teaches all mankind, who will but consult it, that being all equal and independent, no one ought to harm another in his life, health, liberty, or possessions… (and) when his own preservation comes not in competition, ought he, as much as he can, to preserve the rest of mankind, and may not, unless it be to do justice on an offender, take away, or impair the life, or what tends to the preservation of the life, the liberty, health, limb, or goods of another.

-John Locke on the rights to life, liberty, and property of ourselves and others

IV. When Freedom Loses Its Structure

Over the next two centuries, that framework continued to expand. Early expansions focused on political participation—who could vote, who counted as a citizen, and who could take part in public life.

By the mid-20th century, that expansion accelerated through civil rights movements, which pushed the language of equality and access further into law, culture, and institutions.

From the 1960s into the 1970s, the focus widened into personal life. Questions of family, marriage, sexuality, and individual identity were increasingly reframed in terms of autonomy and personal choice.

The sexual revolution, in particular, was widely understood as an expansion of personal freedom: loosening traditional constraints around sex, marriage, and family life. But over time, some of the assumptions underlying that shift have come under renewed scrutiny. The idea that women can navigate complete sexual and relational autonomy without significant cost appears increasingly fragile, especially in the absence of the social structures that once provided stability and direction.

Expanding rights changes the system, not just access to it.

What’s often assumed is that this expansion is self-justifying—that extending rights is always a net good, and that the system can absorb that expansion without consequence. But that assumption is rarely examined.

As the scope of participation widens, so does the demand placed on the system and on the people within it.

A political system built on equal participation assumes a level of judgment, responsibility, and long-term thinking that is not evenly distributed. It assumes that individuals, given more freedom, will be able to navigate it without undermining the conditions that make it possible in the first place.

What we also see today is that the cultural and institutional structures that once shaped behavior (family expectations, community standards, shared moral frameworks) have become much weaker, more contested, or easier to reject.

For most of known human history, moral behavior wasn’t just a matter of personal conviction. It was embedded in small, stable, reputation-based communities where actions were visible, remembered, and judged over time. Behavior carried consequences because it was tied to relationships that endured.

That community system relied on three conditions: shared standards, stable enforcement, and long-term relationships. As those weaken, accountability becomes less consistent or nonexistent. Not because human nature has changed, but because the structures that made behavior visible and tied to consequences have broken down.

Part of that shift is tied to the broader move toward secularism. As religious frameworks lose authority, the shared narratives that once provided cohesion, meaning, and moral orientation begin to fragment. This doesn’t eliminate the human need for structure—it shifts where people look for it. It disperses into competing sources of identity, morality, and meaning.

In The Republic, Plato makes a similar observation about belief itself. What matters is not just what people claim to believe, but whether those beliefs hold under pressure. “We must test them… to see whether they will hold to their convictions when they are subjected to fear, pleasure, or pain.”

Without shared structures reinforcing those convictions, belief becomes more reactive, more situational, and more easily reshaped by external forces.

We are left with a society of multiple, incompatible systems of belief—each with its own values, demands, and claims to legitimacy, but no widely accepted structure holding them together.

What was once a shared moral world becomes a contested one.

In Propaganda, Edward Bernays makes a blunt observation: the conscious and intelligent manipulation of the masses is not only possible, but essential to managing modern society. That insight becomes more relevant, not less, in the absence of a shared framework.

Because when a society loses the unifying structures that once held it together, the vacuum doesn’t stay empty. New ideologies rush in (secular, political, cultural) offering belonging, morality, and meaning, often with more intensity than the systems they replaced.

More autonomy. Less formation. More fragmentation. Less agreement on what freedom even demands.

This raises a harder question: whether removing earlier constraints produced the kind of freedom it promised, or simply replaced one set of pressures with another.

As that imbalance deepens, people don’t simply become more independent. They look for stability elsewhere.

This is where Deneen’s observation becomes useful, even if I don’t fully agree with his framing. As traditional institutions weaken, dependence doesn’t disappear—it shifts. From local, relational structures to larger, more abstract systems like the state and the market.

Another way to see this is that societies don’t just rely on formal institutions. They rely on something less visible—a kind of cultural immune system. Shared norms, expectations, and informal boundaries that regulate behavior without constant enforcement.

When those weaken, systems don’t become freer. They become easier to exploit.

One of the clearest examples of that vulnerability is the modern corporation.

The American system was designed in deep suspicion of concentrated power, yet over time it has extended expansive protections to corporate entities, allowing large institutions, backed by wealth, media, and legal abstraction, to shape public life in ways the founding framework was poorly equipped to restrain.

The founders were wary of concentrated power, but they were not designing a system for multinational corporations with vast economic and informational reach. Over time, constitutional doctrine expanded in ways that made these entities increasingly difficult to limit, culminating in decisions like Citizens United, where the Court held that independent political spending by corporations and unions could not be restricted under the First Amendment.

This is part of the same pattern. A system built to preserve liberty becomes easier to exploit when power no longer appears as a king, a church, or a visible ruling class, but as diffuse institutions operating through law, markets, and media.

And as that has happened, trust has eroded, cooperation has broken down, and the very conditions that made freedom possible have begun to unravel.

But I don’t think that was the original aim of classical liberalism.

It’s not that it set out to dismantle the community. It’s that over time, through cultural, economic, and technological changes, the balance between freedom and structure eroded. And now we’re dealing with the consequences of that imbalance.

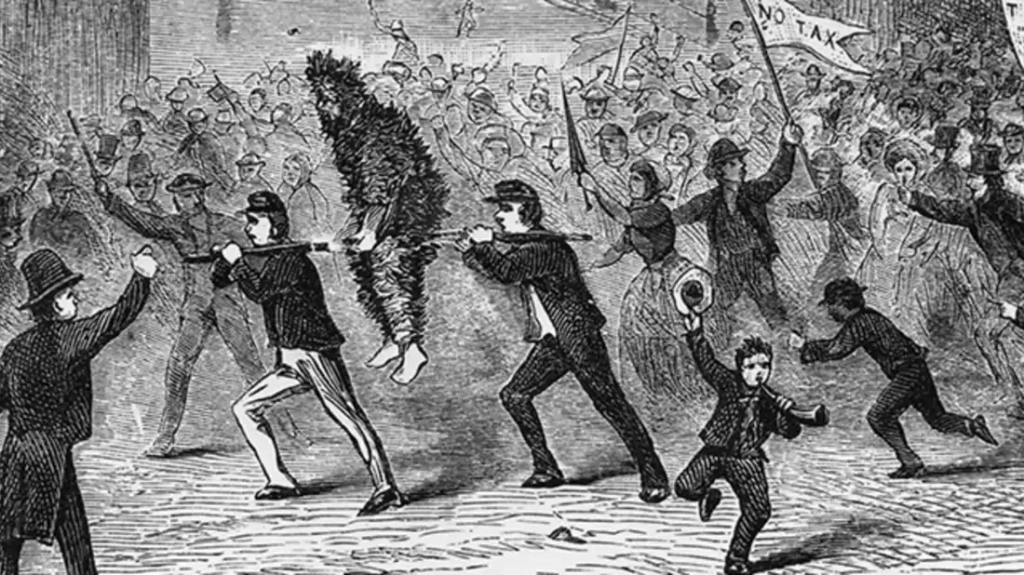

The more I read, the harder it is to ignore the tension at the heart of the American Revolution itself.

It spoke the language of liberty, but often operated through pressure, surveillance, and social enforcement. Groups like the Sons of Liberty didn’t just resist authority. They replaced it with their own forms of coercion, loyalty tests, and public punishment.

I am not saying the ideals were wrong. I am saying that liberty, on its own, doesn’t sustain itself. When formal authority is rejected, power doesn’t disappear. It simply relocates.

And without shared discipline or internal restraint, it often reappears in more fragmented, less accountable forms.

Liberty is not the absence of power.

It’s a problem of how power is structured, restrained, and lived.

There’s another reaction to this tension that’s worth acknowledging, even if it goes too far.

Thinkers like Mencken argued that the real problem isn’t the system, but the people—that democracy inevitably lowers the standard because it reflects the average citizen.

And I understand the sentiment, but that framing misses something important.

The issue isn’t that people are inherently incapable of self-government.

It’s that self-government requires habits, discipline, and formation that a system alone cannot produce.

What makes this moment particularly interesting is that the unease people feel doesn’t map neatly onto political categories.

Across both the left and the right, there’s a growing intuition that something isn’t functioning the way it should.

You see it in the rare points of agreement. Public frustration over the lack of transparency in the Epstein files cuts across political lines, with overwhelming majorities convinced that key information is still being withheld and justice is yet to be served.

You see it in foreign policy as well. Even in a deeply divided country, there is broad skepticism toward escalating conflicts like the war involving Iran, with many of us questioning the purpose, cost, and direction of involvement.

That concern isn’t new. It shows up clearly in Cato’s Letters, where distrust of power wasn’t abstract—it was grounded in history. The Roman Empire was a constant reference point, especially in how standing armies, once established, could be turned inward, gradually eroding liberty and consolidating control.

They weren’t against defense. But they were deeply wary of permanent military power and foreign entanglements that primarily served those in control, not the public. War wasn’t just protection. It was one of the fastest ways power could expand.

And it’s hard not to wonder how they would look at what we now call the military-industrial complex—how permanent it’s become, how embedded it is, and how easily it justifies its own expansion.

Power attracts interests that seek to influence it through money, proximity, and favor, and over time those interests become embedded within the system itself, shaping decisions in ways that are no longer aligned with the public.

Today, this shows up in the sense that governmental power no longer feels like a trust. We the People, those of us who want to put America and her people’s needs first, are witnessing an occupied government like never before, and institutions that are no longer held accountable. They have become self-protective and disconnected from the very people they’re meant to serve.

“Power, in proportion to its extent, is ever prone to wantonness.” — Josiah Quincy Jr., Observations on the Boston Port-Bill (1774)

“The supreme power is ever possessed by those who have arms in their hands.” (colonial political writing, mid-18th century)

Standing armies, they warned, could become “the means, in the hands of a wicked and oppressive sovereign, of overturning the constitution… and establishing the most intolerable despotism.” — Simeon Howard, sermon (c. 1773–1775)

Which is why Jefferson insisted on keeping “the military… subject to the civil power,” not the other way around (1774).

There’s also empirical evidence from over a decade ago pointing in that direction.

A 2014 study by Martin Gilens and Benjamin Page, sometimes known as “the oligarchy study,” analyzed nearly 1,800 policy decisions in the United States and found that economic elites and organized business interests have a substantial independent influence on policy outcomes, while average citizens have little to no independent impact.

Policies favored by the majority tend to pass only when they align with the preferences of the wealthy. When they don’t, public opinion has almost no measurable effect.

This one study doesn’t prove that the system has fully collapsed into oligarchy.

But it does reinforce our intuition that something has shifted, that power is no longer functioning as it should and that representation is much more limited than we assume.

What I’ve learned from putting this together is that this concern is not new. It’s ancient.

It’s the same fear that appears in the Greek philosophers, carries through Rome, reemerges in the founding era, and is now unfolding again in modern society.

This is the same dynamic Madison was pointing to in Federalist No. 10. When legitimacy starts to weaken, people don’t simply disengage.

They form groups around competing explanations for what’s gone wrong—different interests, different priorities, different visions of what should replace it.

Within the modern left, those responses are not all the same.

Establishment Democrats still operate within existing systems. Liberals tend to push for reform through policy. Progressives begin to question the structure itself. And further out, democratic socialists and revolutionary groups are not aiming to fix the system, but to replace it entirely.

That distinction matters. Because once you move from reform to replacement, you’re no longer arguing about how to use a system.

You’re arguing about whether it should exist at all. At the far end of that spectrum, some movements push toward dismantling foundational structures entirely, treating them as irredeemably corrupt.

You can see this in specific, coordinated efforts.

Take large-scale protest movements like the recent “No Kings” demonstrations. On March 28th, 2026, they brought 8 million people into the streets across the United States. With more than 3,300 coordinated events spanning all 50 states, the mobilization set a record for the largest single day of protest in U.S. history.

There are also planned actions like May Day strikes, where activists are calling for mass labor disruption and economic shutdown, as well as organized noncooperation campaigns designed to train people in how to resist, overwhelm, or halt existing systems altogether.

The logic is that capitalism is no longer seen as something to work within, but as something to resist, bypass, or bring to a stop.

Not reform. But disruption and replacement.

I’ve spent enough time around these spaces to understand the appeal. When institutions feel captured or unresponsive, the instinct is not to reform them but to burn them to the ground.

Freedom is not collapsing because people have rejected it. It’s becoming unstable because we can no longer agree on what it is, what it requires, or what its limits should be.

And as more of the burden falls on individuals while leadership fails to model it, people start to feel both responsible and powerless. And that’s where apathy begins to take hold: when it no longer feels like it matters, especially to the people at the top.

V. The Human Problem at the Center of Freedom

A republic doesn’t survive on laws alone.

It survives on citizens who can exercise restraint, who understand limits, who see freedom not just as permission, but as responsibility.

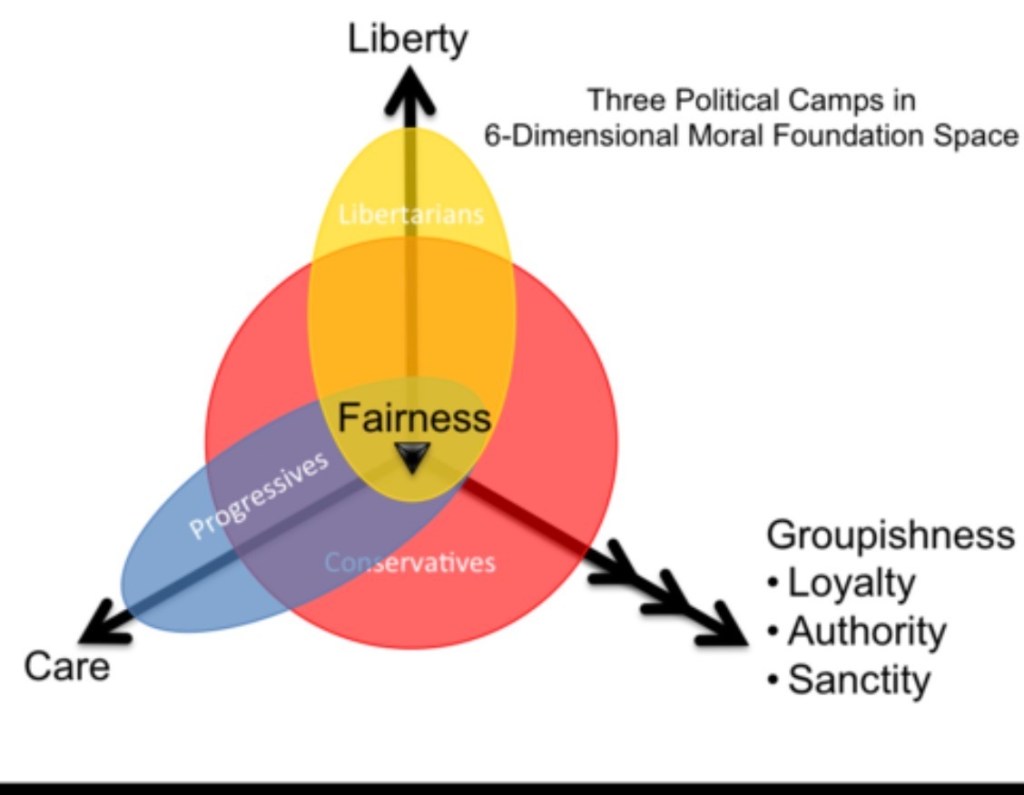

One way to understand this shift more clearly is through moral psychology. Human beings don’t arrive at morality purely through reasoning. We rely on a set of underlying intuitions (care, fairness, loyalty, authority, and a sense of the sacred) that shape how we judge right and wrong before we ever explain why.

In more conservative or traditional societies, these moral intuitions tend to operate together rather than in isolation. Care, fairness, loyalty, authority, and a sense of the sacred reinforce one another, creating a more unified moral framework. People may still disagree, but they are drawing from a shared moral language, with expectations around family, roles, restraint, and what should or should not be done.

But that kind of shared moral framework doesn’t hold evenly across modern society.

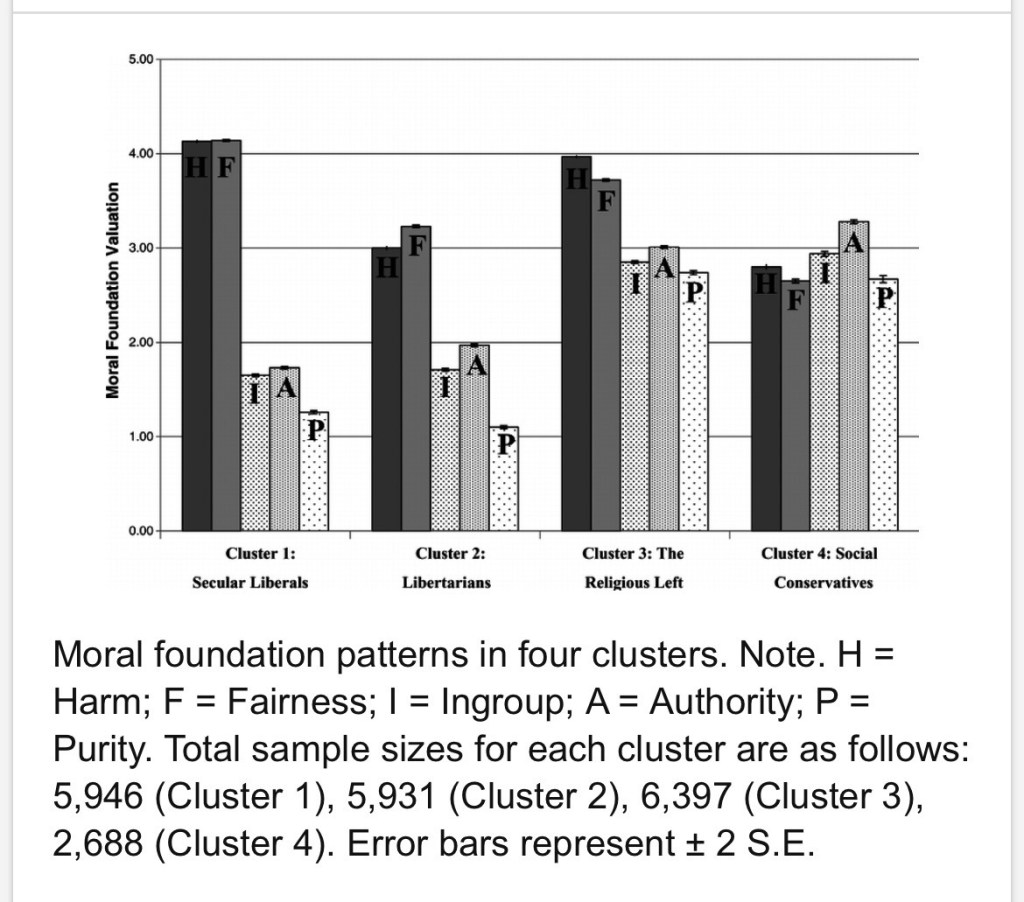

The second way to see this is by looking at how these moral intuitions cluster into distinct patterns across different groups. In the chart, you can see three broad orientations: progressives, conservatives, and libertarians. Progressives tend to cluster around care and fairness. Conservatives draw from a wider range, incorporating loyalty, authority, and a sense of the sacred alongside those concerns. Libertarians center heavily on liberty, placing less weight on the others. What looks like a disagreement about politics is often a difference in moral orientation: people emphasizing entirely different parts of the same moral landscape.

And the differences don’t just show up in orientation, but in intensity.

This bar graph makes the pattern clearer by showing how different groups actually prioritize these moral intuitions.

Secular liberals and the religious left tend to emphasize care and fairness most strongly, focusing on reducing harm and promoting equality. By contrast, more traditional or socially conservative groups draw more evenly across a broader set of values, including loyalty, authority, and a sense of the sacred alongside care and fairness. Libertarians tend to narrow even further, prioritizing individual liberty while placing less emphasis on collective or traditional moral structures.

The result isn’t just disagreement over morality—it’s a difference in what people are even measuring in the first place, which makes shared judgment harder to sustain.

You can see the split in how people respond to the same breakdown in trust.

For those on the left, freedom means removing constraint entirely, and that leads to a push to dismantle systems they see as corrupt or oppressive.

For those on the right, it produces deep suspicion: distrust of elections, media, public health authority, and government itself, along with a desire to restore order, stability, and clearer boundaries. In some cases, that turns into nostalgia for earlier structures: family roles, gender norms, and forms of religious authority that are seen as more stable, even if that restoration comes with its own trade-offs.

These aren’t just different political positions.

They reflect different instincts about what matters most and different assumptions about what freedom is for.

And both risk missing the deeper question.

Not just: what system creates freedom?

But what kind of people can sustain it?

This is where Aristotle’s framework becomes difficult to ignore. His view may be closer to the truth than many modern assumptions. It starts from the premise that people are not equal in their capacity for judgment or self-governance, and it builds from there rather than pretending those differences don’t matter.

That difference in capacity shows up in how people live, how they make decisions, and how they exercise restraint. That’s where his framework of virtue comes in: not as an ideal, but as a way of describing what it actually takes to live well and participate in a functioning society.

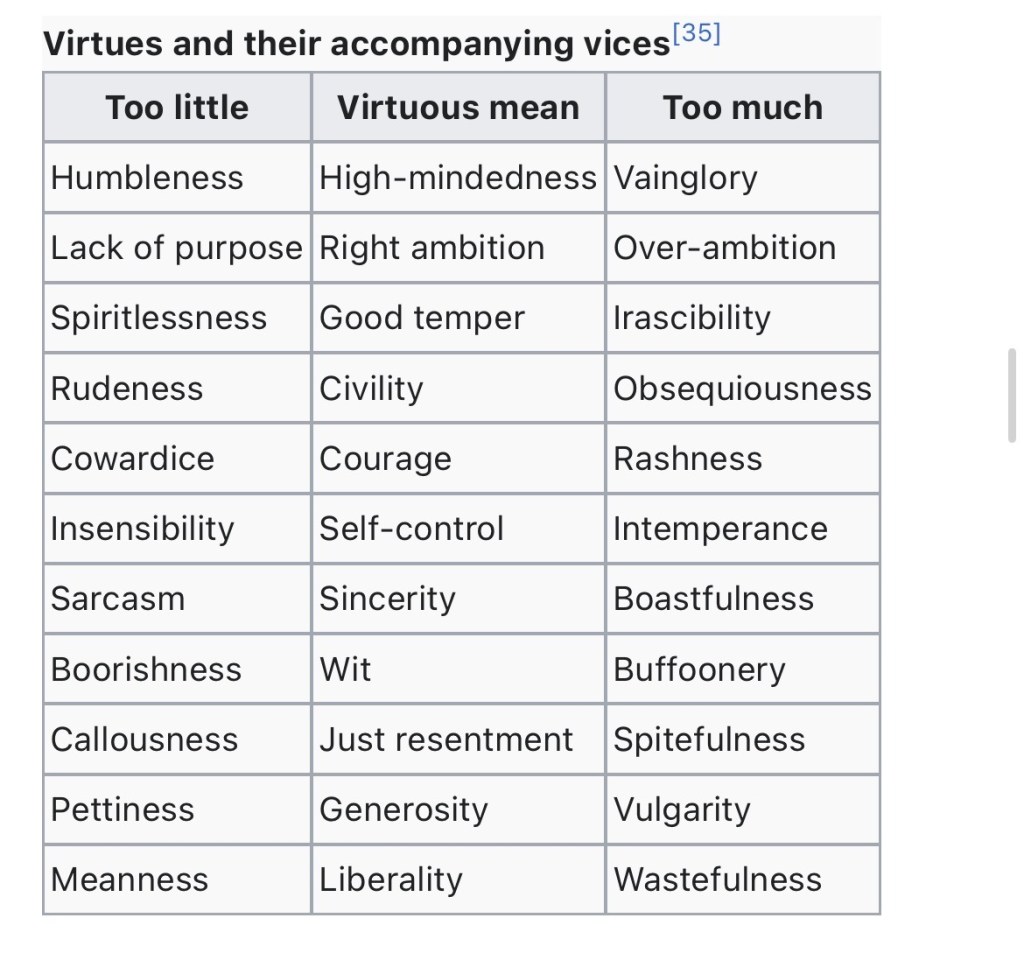

He didn’t think virtue was about perfection. He thought of it as balance.

Courage sits between cowardice and recklessness.

Self-control between indulgence and insensibility.

Generosity between stinginess and excess.

Virtue is not automatic. It is cultivated. And it can be lost.

He applied that same logic to political systems. A government can exist in a healthy form, oriented toward the common good, or in a corrupted form, serving only a faction. At that point, the difference isn’t just structural. It comes down to character.

One tension that keeps resurfacing in political thought is the gap between equality in principle and inequality in capacity.

You can see this play out in small, everyday ways. Give ten people the same freedom, the same opportunity, the same set of rules—and you don’t get the same outcomes. Some plan ahead. Some act impulsively. Some take responsibility. Others look for ways around it. The structure is equal, but the response isn’t.

Because human beings are not identical in judgment, discipline, or temperament. Some are more capable of long-term thinking, self-restraint, and navigating complexity than others.

A free society doesn’t eliminate those differences. It has to operate in spite of them. And that creates the real challenge.

A system built on self-government depends on habits it cannot enforce, on restraint it cannot require, and on a shared understanding of limits it cannot guarantee.

Which raises a difficult question:

What happens when a system built on equal freedom depends on unequal capacities to sustain it?

Freedom is not self-sustaining. The more we treat it like it is, the more fragile it becomes.

When those conditions weaken, the structure doesn’t collapse all at once. It loosens, then drifts, and eventually begins to follow the same pattern that earlier thinkers warned about.

Not because the idea of freedom was flawed, but because it was always contingent on something more demanding than we like to admit.

And that’s what makes the older warnings so difficult to ignore. The concerns that show up in Greek philosophy, carry through Rome, and reappear in the founding era weren’t tied to one moment in history. They’re describing something recurring. Power doesn’t stay put. It accumulates. It protects itself. And without pressure against it, it shifts (often quietly) into something more self-serving than it was at the start.

The documents and letters from the founding era weren’t written for a stable world. They were written by people who assumed this drift was inevitable. That’s why they obsessed over things like faction, corruption, and the abuse of power. Not just as political problems, but as moral ones. Because once corruption sets in, it doesn’t just distort institutions. It reshapes the people within them. A corrupt government cannot be a just government. That’s why they treated free speech, a free press, and an informed public less like ideals and more like important tools: ways of forcing power into the open before it had the chance to consolidate.

Cato’s Letters, in particular, were relentless on this point. Their authors knew that a society that becomes consumed with wealth, status, and self-interest doesn’t just become unequal. It becomes easier to manipulate, easier to divide, and eventually less capable of governing itself at all. Civic virtue wasn’t a side note. It was the condition that made freedom possible in the first place.

And when you look at it from that angle, it doesn’t feel like you’re reading writings from the 18th century. It feels familiar, much closer to home.

Of course, the scale is different now. The mechanisms are different. But the tension is very much the same. With modern technology, governments and corporations operate with a level of reach the founders never could have imagined. Information is filtered, behavior is shaped, and power often moves through systems that don’t look like power at all. You don’t always see it directly. But you feel the effects of it.

So the responsibility doesn’t go away. It never did.

If anything, it becomes less obvious and more necessary at the same time.

A system like this doesn’t hold because it was designed well. It holds, when it does, because enough people are still paying attention. Still pushing back. Still unwilling to let power define its own limits.

And once that slips…once that expectation fades, the structure doesn’t fail all at once. It just stops holding in the way it used to. And the pattern continues.

Resources:

This piece pulls from a mix of ancient sources, founding-era writing, and modern critiques. Not because I agree with all of them, but because each one sharpens a different part of the problem. If you want to work through it yourself, these are the ones that shaped how I’m thinking about it:

Bernard Bailyn — The Ideological Origins of the American Revolution

Less about what the founders built, more about what they were reacting to—especially the collapse of earlier republics.

Alexander Hamilton, James Madison, John Jay — The Federalist Papers

A direct look at how they thought about human nature, power, and why freedom needs structure to hold.

Patrick Deneen — Why Liberalism Failed

I don’t agree with all of it, but the critique of modern individualism and the erosion of shared norms is worth taking seriously.

Plato — The Republic

Still one of the clearest descriptions of how excessive freedom destabilizes a society.

Aristotle — Politics

Helpful for understanding how democracies drift when law loses authority and personality takes over.

Polybius — Histories

His framework for how governments rise and decay is hard to unsee once you see it.

Louise Perry — The Case Against the Sexual Revolution

A modern example of how expanded freedom doesn’t always produce the outcomes people expect.

Jonathan Haidt — The Righteous Mind

Useful for understanding why reason alone doesn’t hold societies together—and why people experience morality so differently.

Charles Freeman — The Closing of the Western Mind

Explores how early Christianity reshaped intellectual life in the West.

Also recommended: The Opening of the Western Mind

Roger E. Olson — The Story of Christian Theology

A clear overview of how Christian thought developed over time and how its internal tensions evolved.

Judith Bennett — Women in the Medieval English Countryside

Insight into everyday life, structure, and roles in pre-modern society.

Christine Fell — Women in Anglo-Saxon England

A look at social organization and cultural norms in early English society.